Modern casino platforms run under real-money, real-time pressure: low-latency gameplay, atomic wallet transactions, certified RNG, regulatory audit logs, and traffic spikes that are often hard to predict. This guide covers the backend architecture principles that matter most when building a reliable, scalable, and audit-ready casino platform, with deep-dive guides for each component area.

1. Monolith vs Microservices — Choosing the Right Paradigm

Most casino platforms start as monoliths and evolve toward microservices as scale, team size, and feature complexity grow. Neither is universally correct — the right choice depends on where your platform is in its lifecycle, how large your engineering team is, and what your compliance requirements demand.

A well-designed monolith is a legitimate starting point for early-stage platforms. It is easier to develop, test, deploy, and debug than a microservices system of equivalent complexity. The operational overhead of managing dozens of services, distributed tracing, and service-to-service authentication is significant — and not justified until the team has the scale to absorb it.

When microservices make sense in casino architecture, the boundaries should reflect domain ownership, not technical layers. Typical boundaries are authentication and identity, payment processing and ledger, RNG and game logic, session management, fraud and risk, analytics, and communication. Each service owns its data store. Shared databases between services are the most common source of the coupling that defeats the purpose of the architecture.

Key practices

- Separate services by business domain: authentication, payments, RNG, game logic, analytics — not by technical layer

- Use Kubernetes for container orchestration and service isolation; Helm for deployment management

- Adopt gRPC for internal service communication and REST for external-facing APIs

- Use a service mesh (Istio or Linkerd) when traffic management, mTLS, and observability across services become complex

- Each service must be independently deployable and independently scalable — if two services must always deploy together, they are one service

- Plan for the strangler fig migration path when moving from monolith — never attempt a big-bang rewrite of a live casino platform
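
The strangler fig path can be pictured as a routing facade that sits in front of the monolith and moves one path prefix at a time to an extracted service. The sketch below is illustrative only: the class name, the path prefixes, and the backend names are assumptions, not part of any real platform.

```python
from dataclasses import dataclass, field


@dataclass
class StranglerRouter:
    """Routes each request path to the legacy monolith or to an extracted service."""

    legacy_backend: str = "monolith"  # hypothetical backend name
    migrated: dict[str, str] = field(default_factory=dict)  # path prefix -> service

    def migrate(self, prefix: str, service: str) -> None:
        """Mark a path prefix as owned by a newly extracted service."""
        self.migrated[prefix] = service

    def rollback(self, prefix: str) -> None:
        """Send a prefix back to the monolith if the new service misbehaves."""
        self.migrated.pop(prefix, None)

    def route(self, path: str) -> str:
        # Longest matching prefix wins, so /wallet/ledger can move before /wallet.
        matches = [p for p in self.migrated if path.startswith(p)]
        if not matches:
            return self.legacy_backend
        return self.migrated[max(matches, key=len)]


router = StranglerRouter()
router.migrate("/auth", "auth-service")
router.migrate("/wallet/ledger", "ledger-service")
```

Because every prefix can be rolled back independently, a failed extraction costs one routing change rather than a platform-wide revert.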

2. Random Number Generator (RNG) Service Design

The RNG service is the most integrity-sensitive component in a casino backend. Its design determines whether game outcomes are provably fair, auditable by regulators, and resistant to manipulation. Unlike most backend services, where correctness means "produces the right output," RNG correctness means "produces output that cannot be predicted in advance and can be independently verified afterwards."

Cryptographically secure pseudorandom number generators (CSPRNGs) are the baseline requirement. Weak PRNGs — including most standard library random functions — are not suitable for real-money gaming. The RNG service must be isolated from other services, produce tamper-evident logs of every seed and output, and support periodic re-seeding from high-entropy sources.

Provably fair mechanisms, common in cryptocurrency casino models, allow players to independently verify outcomes using a commit-reveal scheme: the server seed is hashed and committed before the game, the player provides a client seed, and both are combined to generate the outcome. The server seed is revealed after the round closes. This approach is architecturally sound and increasingly expected by technically sophisticated players even in traditional markets.
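
A minimal sketch of the commit-reveal flow described above, using only the Python standard library. The message layout (`client_seed:nonce:counter`), the function names, and the 37-pocket example are assumptions made for illustration; real provably fair schemes differ in how they encode seeds and map digests to outcomes.

```python
import hashlib
import hmac
import secrets


def commit(server_seed: bytes) -> str:
    """Hash published to the player before the round starts."""
    return hashlib.sha256(server_seed).hexdigest()


def outcome(server_seed: bytes, client_seed: str, nonce: int, sides: int) -> int:
    """Derive a result in [0, sides) from both seeds with HMAC-SHA256."""
    if not 1 <= sides <= 2**32:
        raise ValueError("sides must be between 1 and 2**32")
    # Rejection sampling: discard values above the largest multiple of
    # `sides`, which avoids the bias a plain modulo would introduce.
    limit = (2**32 // sides) * sides
    counter = 0
    while True:
        message = f"{client_seed}:{nonce}:{counter}".encode()
        digest = hmac.new(server_seed, message, hashlib.sha256).digest()
        value = int.from_bytes(digest[:4], "big")
        if value < limit:
            return value % sides
        counter += 1


def verify(commitment: str, revealed_seed: bytes, client_seed: str,
           nonce: int, sides: int, claimed: int) -> bool:
    """Player-side check once the server seed is revealed after the round."""
    seed_matches = hmac.compare_digest(commit(revealed_seed), commitment)
    return seed_matches and outcome(revealed_seed, client_seed, nonce, sides) == claimed


server_seed = secrets.token_bytes(32)   # kept secret until the round closes
commitment = commit(server_seed)        # shown to the player up front
roll = outcome(server_seed, "player-chosen-seed", nonce=1, sides=37)
```

The player can run `verify` independently: a server that swapped its seed after seeing the client seed would fail the commitment check.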

Key practices

- Use CSPRNGs (Fortuna, ChaCha20, or equivalent); never use the standard library's `Math.random()` or equivalent for game outcomes

- Combine client and server seeds for provably fair number generation where applicable

- Use HMAC-SHA256 or equivalent for secure hash verification of seed commitments

- Implement periodic re-seeding from hardware entropy sources or trusted entropy APIs

- Produce tamper-evident logs of every seed input, output, and re-seeding event

- Design for independent certification — maintain versioned game logic with controlled releases that can be reproduced during regulatory audit

3. Real-Time Game State Synchronisation

State synchronisation is the backend challenge that determines whether multiplayer casino games feel fair. If one player in a poker game sees a card before another, or if a roulette wheel result arrives 200ms later on one device than another, the game is broken regardless of whether the underlying math is correct. The challenge is compounding: thousands of concurrent sessions, each with its own state, over unreliable mobile connections with variable latency.

WebSockets provide the persistent connection that makes real-time state delivery possible; HTTP polling is not viable for casino games with sub-second update requirements. For the event propagation layer, Kafka or NATS (with JetStream persistence enabled, since core NATS does not store messages) provides durable, ordered message delivery that survives connection interruptions and supports reconnection replay. When a player disconnects and reconnects mid-round, the client replays missed events from its last acknowledged offset and rejoins a consistent state.
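
The reconnection replay can be sketched with an in-memory log standing in for a broker partition. The class and event fields are hypothetical; a real deployment would read from the broker's own offset or sequence API rather than a Python list.

```python
from dataclasses import dataclass, field


@dataclass
class TableEventLog:
    """Ordered, append-only event log for one game table."""

    events: list[dict] = field(default_factory=list)

    def append(self, event: dict) -> int:
        """Store an event and return its offset."""
        self.events.append(event)
        return len(self.events) - 1

    def replay_after(self, last_acked: int) -> list[tuple[int, dict]]:
        """Return (offset, event) pairs the client has not acknowledged yet.

        A brand-new client passes -1 and receives the full log.
        """
        start = max(last_acked + 1, 0)
        return list(enumerate(self.events[start:], start=start))


log = TableEventLog()
log.append({"type": "round_started"})
log.append({"type": "card_dealt", "seat": 2})
log.append({"type": "bet_placed", "seat": 2, "amount": 500})

# A client that acknowledged offset 0 before dropping its connection.
missed = log.replay_after(last_acked=0)
```

Here `missed` holds offsets 1 and 2 in order, so the client applies them and is back in step with every other player at the table.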

Race conditions are a specific risk in multiplayer games where concurrent player actions must be applied in a deterministic order. Vector clocks, optimistic locking, or actor-model concurrency approaches each address this differently — the right choice depends on your game types and consistency requirements. Event sourcing, where game state is reconstructed from an ordered log of events rather than maintained as mutable state, provides both auditability and strong consistency guarantees at the cost of higher storage and query complexity.

Key practices

- Use WebSockets or Socket.IO for persistent real-time connections — not long-polling

- Use Kafka or NATS JetStream for async game-event propagation with offset-based replay for reconnecting clients

- Apply event sourcing or versioned state snapshots where consistency requirements are highest

- Detect and resolve race conditions in concurrent environments explicitly — do not rely on database-level locking as your only defence

- Add rollback mechanisms to correct state inconsistencies when detected

- Deploy edge servers near player regions to reduce geographic latency — target under 100ms round-trip for game state updates
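
Event sourcing and optimistic locking combine naturally: each writer states the stream version it last saw, and a stale write is rejected instead of silently reordering actions. The following is a sketch under that assumption, with hypothetical event types and amounts in minor currency units. In production the version check and the append must happen atomically, for example inside a single-threaded actor or a conditional database write.

```python
from dataclasses import dataclass, field


class VersionConflict(Exception):
    """Raised when a writer acted on a stale view of the round."""


@dataclass
class RoundStream:
    events: list[dict] = field(default_factory=list)

    @property
    def version(self) -> int:
        return len(self.events)

    def append(self, event: dict, expected_version: int) -> int:
        """Append only if the caller has seen every earlier event."""
        if expected_version != self.version:
            raise VersionConflict(
                f"expected version {expected_version}, stream is at {self.version}"
            )
        self.events.append(event)
        return self.version


def rebuild_pot(stream: RoundStream) -> int:
    """Fold the event log into current state: here, the pot size."""
    pot = 0
    for event in stream.events:
        if event["type"] == "bet_placed":
            pot += event["amount"]
        elif event["type"] == "bet_refunded":
            pot -= event["amount"]
    return pot


stream = RoundStream()
stream.append({"type": "bet_placed", "seat": 1, "amount": 500}, expected_version=0)

# A second player acts on the same stale version and is rejected.
try:
    stream.append({"type": "bet_placed", "seat": 2, "amount": 300}, expected_version=0)
    conflict = False
except VersionConflict:
    conflict = True
```

The rejected writer reloads the stream, re-evaluates its action against the new state, and retries with the current version.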

4. Scalable Wallet and Transaction Ledgering

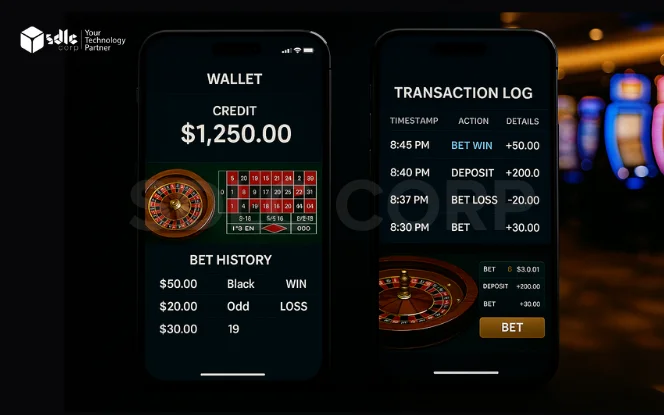

The wallet and ledger are the financial heart of a casino backend. Every bet, win, bonus, deposit, and withdrawal flows through them. Unlike most application data, financial ledger data has zero tolerance for inconsistency — a double credit, a missed settlement, or a corrupted balance is not a bug that can be fixed with a rollback. It is a regulatory incident, a player dispute, and a reconciliation failure.

The wallet service must implement ACID transactions for every balance-affecting operation. Two-phase commit or SAGA patterns coordinate transactions that span multiple services, for example a withdrawal that touches the wallet service, the payment service, and the audit log. The SAGA pattern is preferred for long-running transactions because it avoids distributed locking: each step has a compensating action that is executed if a later step fails, so all systems return to a consistent state through explicit compensation logic rather than held locks.
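
A minimal in-process sketch of SAGA-style compensation for a withdrawal. The step names and the simulated provider failure are assumptions for illustration; a real saga would persist its progress so that compensation survives a crash of the coordinator.

```python
from typing import Callable

# (name, action, compensation)
Step = tuple[str, Callable[[], None], Callable[[], None]]


def run_saga(steps: list[Step]) -> tuple[bool, list[str]]:
    """Run steps in order; on failure, compensate completed steps in reverse."""
    completed: list[Step] = []
    trail: list[str] = []
    for step in steps:
        name, action, _ = step
        try:
            action()
        except Exception:
            trail.append(f"failed:{name}")
            for done_name, _, compensate in reversed(completed):
                compensate()
                trail.append(f"compensated:{done_name}")
            return False, trail
        completed.append(step)
        trail.append(f"done:{name}")
    return True, trail


balance = {"available": 10_000, "reserved": 0}  # minor currency units


def reserve_funds() -> None:
    balance["available"] -= 2_500
    balance["reserved"] += 2_500


def release_funds() -> None:
    balance["available"] += 2_500
    balance["reserved"] -= 2_500


def send_payout() -> None:
    raise RuntimeError("payment provider unavailable")  # simulated failure


def nothing_to_undo() -> None:
    pass


ok, trail = run_saga([
    ("reserve_funds", reserve_funds, release_funds),
    ("send_payout", send_payout, nothing_to_undo),
])
```

After the simulated failure the reserved funds are released again, and `trail` records each step for the audit log.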

The ledger must be append-only and immutable. No balance update should ever modify an existing ledger entry — every transaction creates a new entry with a timestamp, transaction ID, player ID, amount, type, and resulting balance. This structure supports full audit replay, regulatory inspection, and dispute resolution without requiring snapshots or destructive updates.
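
The entry structure above might look like the following sketch. It is an in-memory illustration with assumed class and field names; a production ledger would enforce immutability in the database and in storage policy, not only in application code.

```python
import uuid
from dataclasses import dataclass
from datetime import datetime, timezone
from decimal import Decimal


@dataclass(frozen=True)  # frozen: entries cannot be modified after creation
class LedgerEntry:
    transaction_id: str
    player_id: str
    type: str
    amount: Decimal             # signed: credits positive, debits negative
    resulting_balance: Decimal
    timestamp: datetime


class Ledger:
    def __init__(self) -> None:
        self._entries: list[LedgerEntry] = []

    def balance(self, player_id: str) -> Decimal:
        """The current balance is the resulting balance of the latest entry."""
        for entry in reversed(self._entries):
            if entry.player_id == player_id:
                return entry.resulting_balance
        return Decimal("0")

    def post(self, player_id: str, type: str, amount: Decimal) -> LedgerEntry:
        """Append a new entry; corrections are new reversing entries."""
        new_balance = self.balance(player_id) + amount
        if new_balance < 0:
            raise ValueError("insufficient funds")
        entry = LedgerEntry(
            transaction_id=str(uuid.uuid4()),
            player_id=player_id,
            type=type,
            amount=amount,
            resulting_balance=new_balance,
            timestamp=datetime.now(timezone.utc),
        )
        self._entries.append(entry)
        return entry

    def entries(self, player_id: str) -> tuple[LedgerEntry, ...]:
        return tuple(e for e in self._entries if e.player_id == player_id)


ledger = Ledger()
deposit = ledger.post("player-1", "deposit", Decimal("50.00"))
ledger.post("player-1", "bet", Decimal("-20.00"))
```

Replaying `entries("player-1")` and summing the amounts must always reproduce the latest `resulting_balance`, which is the property an audit replay relies on.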

Key practices

- Use SAGA pattern for distributed transaction coordination across wallet, payment, and audit services

- Maintain an immutable, append-only ledger — never update existing transaction records

- Implement idempotency keys on every wallet call — provider retries and network failures must not create duplicate credits

- Separate transaction processing from ledger write paths — high-volume game events should not block financial settlement

- Run daily three-way reconciliation: wallet ledger vs payment processor vs bank settlement

- Use CockroachDB, Vitess, or ScyllaDB for distributed wallet storage where multi-region resilience is required
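
Idempotency keys are the practice most often implemented incorrectly, so a small sketch may help. The class and method names are hypothetical, and the dictionaries stand in for database tables. In a real wallet the key lookup and the balance update must share one database transaction, with a unique constraint on the key.

```python
from decimal import Decimal


class WalletService:
    def __init__(self) -> None:
        self.balances: dict[str, Decimal] = {}
        # idempotency key -> balance returned by the first successful call
        self._processed: dict[str, Decimal] = {}

    def credit(self, idempotency_key: str, player_id: str, amount: Decimal) -> Decimal:
        """Apply a credit once; a retry with the same key returns the stored result."""
        if idempotency_key in self._processed:
            return self._processed[idempotency_key]
        new_balance = self.balances.get(player_id, Decimal("0")) + amount
        self.balances[player_id] = new_balance
        self._processed[idempotency_key] = new_balance
        return new_balance


wallet = WalletService()
first = wallet.credit("win-round-8841", "player-1", Decimal("25.00"))
retry = wallet.credit("win-round-8841", "player-1", Decimal("25.00"))  # provider retry
```

Both calls return the same balance and the player is credited once, which is the behaviour a game provider's retry logic depends on.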

5. Anti-Fraud and Anomaly Detection Layer

Casino platforms face fraud patterns that are distinct from general e-commerce fraud. Bonus abuse at registration — creating multiple accounts to claim welcome bonuses — is the highest-volume fraud type by case count. Chip dumping in poker, where colluding players transfer chips to a nominated account, is harder to detect but higher-value per case. Payment fraud through stolen cards is a shared challenge with e-commerce but requires casino-specific models because gaming transaction patterns look unusual to general-purpose fraud systems.

Real-time fraud detection requires stream processing — Kafka Streams, Apache Flink, or equivalent — rather than batch analysis. By the time a nightly fraud report identifies a bonus abuse ring, hundreds of accounts may have been created. The detection must happen at the point of registration, deposit, or first withdrawal for it to prevent loss rather than simply identify it after the fact.

Machine learning models improve fraud detection accuracy over rule-based systems but introduce the challenge of explainability. Regulators and internal audit teams require understanding of why specific players were flagged or restricted. Model decisions must produce human-readable outputs, and the version of the model active at the time of each decision must be logged alongside the decision for reproducibility.

Key practices

- Analyse behavioural patterns: bet timing, transaction velocity, device fingerprint, IP history, and session patterns

- Use Apache Flink or Kafka Streams for real-time stream processing — batch fraud detection is too slow for prevention

- Implement ML anomaly detection for player segmentation and unusual pattern identification

- Log the rule or model version and the score with every fraud decision; this is required for the audit trail and for model governance

- Maintain separate review queues by fraud category — bonus abuse, payment fraud, and collusion require different analyst skills

- Monitor false positive rates by segment; blocking legitimate players can cost as much player lifetime value (LTV) as missed fraud
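
As an illustration of point-of-registration detection, the sketch below applies a sliding-window velocity rule per device fingerprint and returns a decision that carries its rule version, score, and a human-readable reason. The thresholds, the scoring formula, and the rule name are assumptions; a stream processor such as Flink or Kafka Streams would hold the window state in a production system.

```python
from collections import defaultdict, deque
from dataclasses import dataclass

RULE_VERSION = "signup-velocity-v1"  # hypothetical rule identifier


@dataclass(frozen=True)
class Decision:
    flagged: bool
    score: float
    rule_version: str
    reason: str


class SignupVelocityRule:
    def __init__(self, max_signups: int = 3, window_seconds: int = 3600) -> None:
        self.max_signups = max_signups
        self.window_seconds = window_seconds
        self._seen: dict[str, deque[float]] = defaultdict(deque)

    def evaluate(self, device_fingerprint: str, timestamp: float) -> Decision:
        """Score one registration event as it arrives."""
        window = self._seen[device_fingerprint]
        window.append(timestamp)
        # Drop registrations that have fallen out of the sliding window.
        while window and window[0] <= timestamp - self.window_seconds:
            window.popleft()
        count = len(window)
        score = min(1.0, count / (self.max_signups + 1))
        reason = f"{count} registrations from one device in {self.window_seconds}s"
        return Decision(count > self.max_signups, score, RULE_VERSION, reason)


rule = SignupVelocityRule(max_signups=2, window_seconds=60)
first = rule.evaluate("device-abc", timestamp=0.0)
second = rule.evaluate("device-abc", timestamp=10.0)
third = rule.evaluate("device-abc", timestamp=20.0)   # third signup within 60s
later = rule.evaluate("device-abc", timestamp=100.0)  # earlier signups have expired
```

Persisting the whole `Decision` alongside the registration gives analysts and auditors the version, score, and reason for every flag.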

6. Session Management and Security Hardening

Casino session management must satisfy two competing requirements: security hardening (preventing account takeover, session hijacking, and credential theft) and low friction for legitimate players (seamless re-authentication, device persistence, and uninterrupted gameplay across connection drops). The tension between these requirements is resolved through layered session design rather than choosing between them.

Combining short-lived access tokens with longer-lived refresh tokens is the standard pattern. Access tokens (JWT or opaque, depending on your validation model) are issued on authentication and expire within minutes to hours. Refresh tokens are stored server-side with revocation capability, enabling forced logout of compromised sessions. All session tokens must be tied to device and IP at issuance, with step-up authentication required for high-risk actions such as withdrawal or account detail changes.

Redis is the standard choice for session storage — sub-millisecond reads, TTL-based expiry, and horizontal scaling to handle concurrent session load. Redis Cluster handles thousands of simultaneous active sessions with consistent latency, and active-active Redis configurations provide session persistence across regional failovers. The session data model must be lean — store session metadata, not game state, which belongs in the game service.

Key practices

- Use short-lived access tokens plus server-side revocable refresh tokens — not long-lived JWTs

- Store sessions in Redis with explicit TTL; maintain a revocation list for force-logout on compromise

- Require step-up authentication for withdrawals, account detail changes, and high-value actions

- Bind tokens to device fingerprint and IP at issuance; flag anomalous re-use patterns

- Review all authentication flows against the OWASP Top Ten vulnerability categories

- Maintain load-balanced session affinity for in-progress game rounds; never lose a live session to a deployment event
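
A sketch of the token pattern with opaque tokens and an in-memory store standing in for Redis. The TTL values, class name, and device binding by a plain `device_id` string are illustrative assumptions; with Redis, the expiry timestamps would be replaced by key TTLs.

```python
import secrets
import time
from typing import Callable, Optional

ACCESS_TTL = 15 * 60          # seconds; illustrative value
REFRESH_TTL = 7 * 24 * 3600   # seconds; illustrative value


class SessionStore:
    """In-memory stand-in for a Redis-backed session store."""

    def __init__(self, clock: Callable[[], float] = time.time) -> None:
        self._clock = clock
        self._access: dict[str, tuple[str, float]] = {}         # token -> (refresh token, expiry)
        self._refresh: dict[str, tuple[str, str, float]] = {}   # token -> (player, device, expiry)

    def login(self, player_id: str, device_id: str) -> tuple[str, str]:
        refresh_token = secrets.token_urlsafe(32)
        expires = self._clock() + REFRESH_TTL
        self._refresh[refresh_token] = (player_id, device_id, expires)
        return self._issue_access(refresh_token), refresh_token

    def _issue_access(self, refresh_token: str) -> str:
        access_token = secrets.token_urlsafe(32)
        self._access[access_token] = (refresh_token, self._clock() + ACCESS_TTL)
        return access_token

    def refresh(self, refresh_token: str, device_id: str) -> Optional[str]:
        """Issue a new access token only for the device the session is bound to."""
        record = self._refresh.get(refresh_token)
        if record is None:
            return None
        _, bound_device, expires = record
        if expires <= self._clock() or bound_device != device_id:
            return None
        return self._issue_access(refresh_token)

    def validate(self, access_token: str) -> Optional[str]:
        """Return the player ID, or None if expired or the session was revoked."""
        record = self._access.get(access_token)
        if record is None or record[1] <= self._clock():
            return None
        parent = self._refresh.get(record[0])
        return parent[0] if parent else None

    def revoke(self, refresh_token: str) -> None:
        """Force logout: access tokens issued from this session stop validating."""
        self._refresh.pop(refresh_token, None)
```

Because `validate` checks the parent refresh record, revoking a compromised session takes effect immediately rather than after the access token expires.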

7. High Availability and Disaster Recovery Architecture

Casino platforms operate on real money with regulatory uptime obligations and players in multiple time zones. A 3am maintenance window is peak traffic for players in Asia, and a regulated market like the UK requires documented uptime and incident reporting. Designing for high availability is not optional; it is a licence condition in most regulated jurisdictions.

The choice between active-active and active-passive disaster recovery depends on your Recovery Time Objective (RTO) and Recovery Point Objective (RPO). Active-active — where traffic runs across multiple regions simultaneously — provides near-zero RTO and RPO but at significantly higher infrastructure and complexity cost. Active-passive — where a secondary region is warm but not serving traffic — provides lower cost with RTO typically measured in minutes rather than seconds. Most casino platforms use active-active for their core payment and session services and active-passive for lower-criticality services.

Chaos engineering — deliberately injecting failures into production systems during planned exercises — is the only reliable way to verify that your DR architecture actually works. Simulating zone failure, database leader failure, and third-party provider unavailability in a controlled drill environment reveals failure modes that tabletop exercises miss. AWS GameDay and Netflix-style chaos injection are well-documented approaches applicable to casino infrastructure.

Key practices

- Deploy across multiple cloud regions and availability zones — never rely on single-region or single-AZ for production

- Define explicit RTO and RPO targets per service tier; match DR architecture to those targets

- Use global load balancing with health check-based failover — player traffic routes away from degraded regions automatically

- Test failover under load, not just in idle environments — failover behaviour under 10,000 concurrent sessions is different from idle

- Run chaos engineering exercises quarterly; document results and required remediations

- Maintain a runbook per failure scenario — zone failure, database leader failure, payment provider outage — that on-call engineers can execute under pressure
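
Health check-based failover can be reduced to a small decision rule: a region is taken out of rotation after a run of failed probes, and traffic goes to the lowest-latency region that remains. The sketch below assumes a threshold of three consecutive failures and uses invented region names and latencies; managed global load balancers implement the same idea with their own configuration.

```python
from dataclasses import dataclass


@dataclass
class RegionHealth:
    name: str
    latency_ms: float
    failure_threshold: int = 3      # assumed value
    consecutive_failures: int = 0

    def record_probe(self, ok: bool) -> None:
        """A success resets the counter; a failure extends the current run."""
        self.consecutive_failures = 0 if ok else self.consecutive_failures + 1

    @property
    def healthy(self) -> bool:
        return self.consecutive_failures < self.failure_threshold


def route(regions: list[RegionHealth]) -> str:
    """Pick the lowest-latency region that is still passing health checks."""
    healthy = [r for r in regions if r.healthy]
    if not healthy:
        raise RuntimeError("no healthy region available")
    return min(healthy, key=lambda r: r.latency_ms).name


london = RegionHealth("london", latency_ms=20.0)
frankfurt = RegionHealth("frankfurt", latency_ms=35.0)
regions = [london, frankfurt]

first_choice = route(regions)

for _ in range(3):                  # three failed probes in a row
    london.record_probe(ok=False)

after_failover = route(regions)
```

Requiring several consecutive failures avoids flapping on a single dropped probe, at the cost of a slightly longer detection time that should be counted against the RTO target.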

8. Regulatory Compliance and Auditing Systems

Compliance is an architectural constraint, not a feature you add before a regulatory audit. The difference between platforms that pass regulatory inspection and those that fail is almost always whether audit controls were designed into the system or retrofitted after the core platform was built.

WORM (Write Once Read Many) storage is the regulatory standard for audit logs in gambling markets that require tamper-evident records. This applies to game round outcomes, wallet transactions, player account changes, and administrative actions. AWS S3 Object Lock, Azure Immutable Blob Storage, or equivalent on-premises WORM systems all satisfy this requirement. Retention periods are jurisdiction-specific — typically 5 to 7 years — and must be enforced through storage policy, not just application logic.

Compliance by design also means separating game math and RNG logic from the client and treating it as a controlled backend component with versioned releases. Regulators require the ability to reproduce a specific game outcome from a specific session — which requires that the RNG seed, client seed, game version, and outcome are all logged with immutable timestamps and are queryable for investigation.

Key practices

- Use WORM storage for all audit logs — game rounds, wallet transactions, account changes, and admin actions

- Maintain timestamped, immutable audit trails for every player-affecting and system-affecting event

- Stream compliance logs into ELK (Elasticsearch, Logstash, Kibana) or equivalent for queryable audit replay

- Implement PCI-DSS scope reduction through tokenisation and hosted payment fields

- Design sandbox environments for audit replay — regulators may request outcome reconstruction from historical sessions

- Align GDPR data handling with your processing register; implement right-to-erasure workflows that cascade across all data stores
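
Tamper evidence can also be added at the application level by chaining each audit record to the hash of the previous one. This is a sketch of that general technique, not a substitute for WORM storage, and the record fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record in a chain


def _digest(prev_hash: str, payload: dict) -> str:
    # Canonical JSON so the same payload always hashes to the same value.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode()).hexdigest()


def append_record(chain: list[dict], payload: dict) -> dict:
    """Append a record whose hash covers its payload and the previous hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    record = {
        "payload": payload,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, payload),
    }
    chain.append(record)
    return record


def verify_chain(chain: list[dict]) -> bool:
    """Recompute every hash; any edited or removed record breaks the chain."""
    prev_hash = GENESIS
    for record in chain:
        if record["prev_hash"] != prev_hash:
            return False
        if record["hash"] != _digest(prev_hash, record["payload"]):
            return False
        prev_hash = record["hash"]
    return True


audit_log: list[dict] = []
append_record(audit_log, {"round_id": "r-1", "game_version": "1.4.2", "outcome": 17})
append_record(audit_log, {"round_id": "r-2", "game_version": "1.4.2", "outcome": 3})
intact = verify_chain(audit_log)

audit_log[0]["payload"]["outcome"] = 7   # simulated tampering
still_intact = verify_chain(audit_log)
```

Publishing or separately storing the latest hash at intervals lets an auditor confirm that no earlier record has been rewritten.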

9. Analytics and Business Intelligence Integration

Casino analytics splits into two distinct domains that require different tooling and different access patterns: product analytics (player behaviour, session patterns, game performance, funnel metrics) and financial analytics (GGR, RTP reconciliation, bonus cost, payment settlement). Mixing these in a single data store or pipeline creates both operational confusion and compliance risk — financial data has retention requirements and access controls that do not apply to behavioural data.

The event streaming layer is the foundation of both. Every game round, every bet, every session start and end, and every payment event should produce a structured analytics event within seconds of occurrence. Kafka or Apache Pulsar are the standard choices for this streaming layer — they provide durable, replayable event logs that both real-time dashboards and batch analytical jobs can consume independently.

For storage and querying, columnar analytical databases — Snowflake, ClickHouse, BigQuery — outperform traditional relational databases for the aggregation queries that BI dashboards require. A typical casino GGR report aggregates millions of round events by game, provider, player segment, and time period. Row-store databases struggle with this query pattern; columnar stores handle it in seconds.

Key practices

- Stream telemetry from game services using Kafka or Pulsar — define event schemas before the integration, not after

- Separate product analytics and financial analytics — different access controls, retention policies, and query patterns

- Store structured event data in ClickHouse, Snowflake, or BigQuery for analytical query performance

- Track core KPIs at game-round level: RTP per game, DAU, LTV, ARPU, bonus cost, session duration

- Use A/B testing infrastructure tied to the analytics pipeline — measure the impact of game changes on retention and revenue

- Build real-time dashboards for operations teams — settlement drift, open rounds, error rate spikes need minute-level visibility, not daily reports
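
For reference, the two core financial KPIs reduce to simple aggregates over round events: GGR is total bets minus total wins, and observed RTP is total wins divided by total bets. The sketch below computes both per game from a handful of invented rounds; in a columnar store the same logic would be a grouped aggregation query.

```python
from collections import defaultdict
from decimal import Decimal

# Invented sample data for illustration only.
rounds = [
    {"game": "roulette", "bet": Decimal("10.00"), "win": Decimal("0.00")},
    {"game": "roulette", "bet": Decimal("10.00"), "win": Decimal("18.00")},
    {"game": "slots", "bet": Decimal("2.00"), "win": Decimal("1.50")},
    {"game": "slots", "bet": Decimal("2.00"), "win": Decimal("0.00")},
]


def kpis_by_game(rounds: list[dict]) -> dict[str, dict[str, Decimal]]:
    """Aggregate GGR and observed RTP per game from round-level events."""
    totals: dict[str, dict[str, Decimal]] = defaultdict(
        lambda: {"bets": Decimal("0"), "wins": Decimal("0")}
    )
    for r in rounds:
        totals[r["game"]]["bets"] += r["bet"]
        totals[r["game"]]["wins"] += r["win"]
    return {
        game: {"ggr": t["bets"] - t["wins"], "rtp": t["wins"] / t["bets"]}
        for game, t in totals.items()
        if t["bets"] > 0
    }


report = kpis_by_game(rounds)
```

Using `Decimal` rather than floats keeps the analytics figures reconcilable with the ledger to the last minor unit.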

10. CI/CD and Observability at Scale

Shipping changes to a live casino platform is a high-risk operation. A deployment that introduces a game logic bug or breaks a wallet callback does not fail silently — it produces incorrect game outcomes or financial discrepancies that require manual investigation, customer communication, and potentially regulatory notification. The engineering answer is not to deploy less frequently — it is to make each deployment safer through automation, progressive rollout, and observability that detects problems before they affect significant player populations.

Canary deployments and feature flags are the standard tools for progressive rollout in casino systems. A new game version is deployed to 1% of traffic, monitored for anomalous error rates or RTP deviation, and expanded gradually if metrics remain within bounds. Feature flags allow game logic changes to be enabled and disabled without deployment — critical for rapid response when an issue is discovered post-launch.

Distributed tracing is non-negotiable in a microservices casino architecture. When a player raises a dispute about a game outcome, you need to trace the exact execution path — from the frontend request through the API gateway, session service, game service, RNG service, wallet service, and audit log — within minutes, not hours. OpenTelemetry has emerged as the standard instrumentation layer that works across services regardless of language, framework, or cloud provider.

Key practices

- Automate build, test, and deployment with GitHub Actions, GitLab CI, or equivalent — no manual production deployments

- Use ArgoCD for declarative GitOps deployment and Argo Rollouts for canary and blue-green deployment strategies

- Implement distributed tracing with OpenTelemetry across all services from day one — retrofitting tracing is expensive

- Use Prometheus and Grafana (or equivalent) for infrastructure and application metrics with SLO-based alerting

- Monitor game-specific metrics in the observability stack: RTP drift, round settlement latency, rollback rate, error rate per game

- Maintain feature flags for all significant game logic changes — enables instant rollback without deployment
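
The canary gate described in this section can be expressed as a small decision function that a rollout controller evaluates on each analysis interval. All thresholds below are placeholder assumptions. In particular, the RTP tolerance should be derived from each game's volatility and the sample size, because short-run RTP on a high-variance game swings widely even when the game is correct.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CanaryMetrics:
    rounds: int
    errors: int
    total_bet: float
    total_win: float


def canary_decision(
    canary: CanaryMetrics,
    expected_rtp: float,
    min_rounds: int = 10_000,           # assumed minimum sample
    max_error_rate: float = 0.001,      # assumed threshold
    max_rtp_deviation: float = 0.05,    # assumed threshold
) -> str:
    """Return 'hold', 'rollback', or 'promote' for the current canary."""
    if canary.rounds < min_rounds or canary.total_bet <= 0:
        return "hold"  # not enough data to judge either way
    if canary.errors / canary.rounds > max_error_rate:
        return "rollback"
    observed_rtp = canary.total_win / canary.total_bet
    if abs(observed_rtp - expected_rtp) > max_rtp_deviation:
        return "rollback"
    return "promote"


too_early = canary_decision(CanaryMetrics(500, 0, 1_000.0, 950.0), expected_rtp=0.96)
erroring = canary_decision(CanaryMetrics(20_000, 100, 40_000.0, 38_400.0), expected_rtp=0.96)
drifting = canary_decision(CanaryMetrics(20_000, 5, 40_000.0, 44_000.0), expected_rtp=0.96)
within_bounds = canary_decision(CanaryMetrics(20_000, 5, 40_000.0, 38_000.0), expected_rtp=0.96)
```

A "hold" result keeps the canary at its current traffic share, so a quiet period never promotes or rolls back a release by accident.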

Frequently Asked Questions

Common questions on casino game backend architecture — design decisions, compliance requirements, and technology choices.

What is casino game backend architecture?

Casino game backend architecture is the design of the server-side systems that power online casino platforms. It covers the services, databases, messaging systems, and integration patterns responsible for game logic, RNG fairness, wallet and ledger management, session handling, fraud detection, compliance auditing, analytics, and high availability. Unlike most web application backends, casino backends must meet strict requirements for financial accuracy, regulatory auditability, real-time latency, and certified fairness.

Should a casino platform start with a monolith or microservices?

Most casino platforms benefit from starting with a well-structured monolith and migrating toward microservices as team size, feature complexity, and scale justify the operational overhead. A monolith is easier to develop, test, and debug. Microservices become valuable when separate domains need independent scaling, independent deployment, or strong fault isolation. Domain boundaries should reflect business ownership: authentication, payments, RNG, game logic, session management, fraud, and analytics are appropriate microservice boundaries. Shared databases between services defeat the purpose of the architecture.

What kind of RNG does a regulated casino backend require?

Regulated casino backends require cryptographically secure pseudorandom number generators (CSPRNGs) such as Fortuna or ChaCha20. Standard library random functions are not acceptable for real-money gaming. The RNG service must produce tamper-evident logs of every seed and output, support periodic re-seeding from high-entropy sources, and be independently certifiable. Provably fair mechanisms, which allow outcome verification using client-server seed commitment schemes, add an additional layer of player-verifiable fairness beyond regulatory minimum requirements.

How do casino platforms handle peak traffic?

Casino platforms handle peak traffic through horizontal scaling, queue-based load levelling, and pre-scaling for predictable events. Kubernetes horizontal pod autoscaling responds to real-time load increases. Event-driven architecture with Kafka decouples game event production from processing, preventing overload cascades. For predictable spikes during major sporting events or tournaments, pre-scale the session and game services before traffic arrives. Circuit breakers prevent degraded third-party services from consuming threads needed by healthy services.

How should a casino wallet and ledger be designed?

Casino wallet services must implement ACID transactions for every balance-affecting operation. The ledger must be append-only and immutable — no existing entry is ever modified. Idempotency keys on every wallet API call prevent duplicate credits from retries. The SAGA pattern coordinates transactions that span multiple services. Daily three-way reconciliation between the wallet ledger, payment processor records, and bank settlement is a minimum operational requirement. Distributed databases like CockroachDB or ScyllaDB provide multi-region resilience for platforms operating across multiple jurisdictions.

What compliance and audit requirements apply to casino backends?

Regulated casino backends must maintain WORM (Write Once Read Many) audit logs for game rounds, wallet transactions, and account changes. Retention periods are jurisdiction-specific, typically 5 to 7 years. PCI DSS compliance is required for platforms handling card data, and tokenisation with hosted payment fields reduces the number of systems in scope. GDPR requires Data Processing Agreements with every vendor processing EU player data, right-to-erasure workflows that cascade across all data stores, and documented data residency for player data. Game math and RNG logic must be versioned and reproducible for regulatory audit.

What observability tools do casino backends use?

Casino backends typically use Prometheus and Grafana for metrics and alerting, OpenTelemetry for distributed tracing across microservices, and ELK (Elasticsearch, Logstash, Kibana) or similar for log aggregation and compliance audit replay. Game-specific metrics — RTP drift per game, round settlement latency, rollback rate, error rate per provider — should be tracked alongside infrastructure metrics. Distributed tracing is essential for dispute resolution: every player complaint about a game outcome requires tracing the full execution path within minutes.

How does casino fraud detection work?

Casino fraud detection uses real-time stream processing with Apache Flink or Kafka Streams to analyse behavioural patterns at the point of registration, deposit, and withdrawal. Machine learning models improve detection accuracy over rule-based systems for complex patterns like collusion and chip dumping. Every fraud decision must log the rule version and risk score that triggered it for audit trail purposes. Separate review queues by fraud category are recommended, as bonus abuse, payment fraud, and collusion require different analyst expertise. False positive monitoring is as important as detection accuracy.

What high availability and disaster recovery setup do casino platforms need?

Casino platforms require multi-region, multi-availability-zone deployment with defined RTO and RPO targets per service tier. Core payment and session services typically warrant active-active configurations for near-zero RTO. Lower-criticality services can use active-passive configurations. Chaos engineering exercises — deliberately injecting zone failures, database leader failures, and third-party provider outages — are required to verify DR architecture works under load. Per-incident runbooks for each failure scenario are essential for on-call engineers working under pressure.

How should deployments to a live casino platform be managed?

Casino platform deployments require automated build, test, and deployment pipelines with no manual production deployments. Canary deployments — rolling out to 1-5% of traffic with automated rollback triggers — prevent game logic bugs from affecting large player populations. Feature flags allow game changes to be enabled and disabled without deployment. GitOps with ArgoCD provides declarative, auditable deployment history that can be inspected during regulatory review. All deployments should monitor game-specific metrics (RTP, error rate, rollback rate) automatically during and after rollout.
